Why Voice Agents Need a Different Inference Stack

February 9, 2026

Chances are, your intelligent voice AI agent gives relevant responses. But every exchange has a half-second pause that makes the whole thing feel broken. So the voice agent gets shelved.

That pause killed it.

At PolarGrid, we've spent the past year optimizing milliseconds because voice agents that don't match human conversational timing don't get used.

Here's what psycholinguistic research tells us about human conversation.

In a landmark 2009 PNAS study, Stivers et al. analyzed turn-taking across ten languages, from Japanese to Tzeltal to Dutch, and found something remarkable: median response gaps ranged from 0 to 300 milliseconds, with most falling between 0 and 200ms. This pattern held regardless of culture, language structure, or geographic location.

More recent work by Meyer (2023) in the Journal of Cognition confirms this finding: median latencies in conversational speech are consistently reported under 300ms. Furthermore, Levinson and Torreira's research places the pause between speakers at around 200ms, noting that human conversation involves less than 5% simultaneous speech, with or without visual contact.

Our brains evolved to interpret pauses as meaningful signals. Beyond 300ms, listeners begin anticipating a hedged response. By 600-800ms, they have already concluded something is wrong.

Thus, intelligent voice agents that respond outside 300ms communicate incompetence through silence.

The contact center industry has learned this lesson the hard way.

Industry experience with voice AI deployments shows that response latency beyond one second causes users to talk over the agent, breaking intent recognition and forcing conversation loops. At the one-second mark, customers start hanging up. According to industry reports, abandonment rates at that point are 40% higher than for sub-second responses.

One second is a hard ceiling that most voice AI platforms can't reliably stay under. Even the faster models struggle to meet this target because the infrastructure wasn't designed for it.

Standard LLM inference is optimized for throughput and batching. Send a request, queue it with others, maximize GPU utilization, return results. This works brilliantly for email summarization and code generation.

For voice, batching is the enemy.

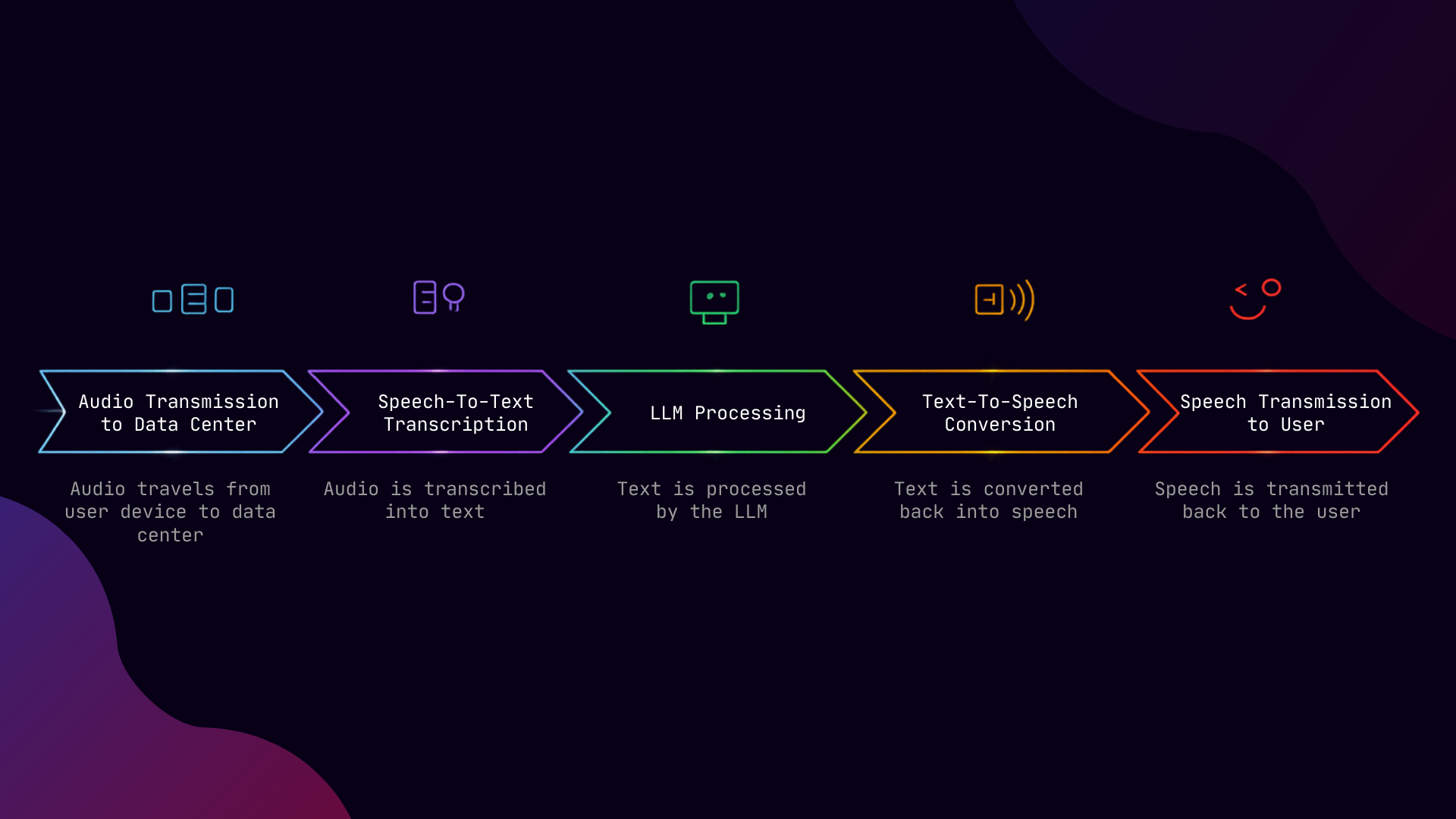

Consider a voice pipeline with three centralized models: audio travels from the user's device to a centralized data center (100ms), gets transcribed by a speech-to-text (STT) model (100-300ms), is processed by an LLM (200-800ms), is converted back to speech by a text-to-speech (TTS) model (100-300ms), and is then transmitted back to the user (another 100ms). If the models are not on the same server, each inter-model network hop adds another 10-100ms. Add it up and you're looking at roughly 600ms at best and over 1.6 seconds at worst before the first word reaches the user's ear.

And that's under ideal conditions. Add network jitter, queue delays, and the inevitable p99 spikes, and you get an engagement-breaking agent.
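As a rough check, the per-stage ranges quoted above can be summed directly. The sketch below is illustrative only: the stage names, the `budget` helper, and the assumption of two inter-model hops (STT to LLM, LLM to TTS) are ours for the example, and the best case assumes every stage hits its minimum at once, which rarely happens in practice.

```python
# Illustrative latency budget for a centralized voice pipeline.
# Ranges are (best_ms, worst_ms), taken from the figures quoted in the text.
STAGES_MS = {
    "uplink": (100, 100),    # user device -> data center
    "stt": (100, 300),       # speech-to-text
    "llm": (200, 800),       # language model
    "tts": (100, 300),       # text-to-speech
    "downlink": (100, 100),  # data center -> user device
}

# Cost of one inter-model network hop when models are not co-located.
HOP_MS = (10, 100)


def budget(stages, hops=0, hop_ms=HOP_MS):
    """Return (best_case_ms, worst_case_ms) for the whole pipeline."""
    best = sum(low for low, _ in stages.values()) + hops * hop_ms[0]
    worst = sum(high for _, high in stages.values()) + hops * hop_ms[1]
    return best, worst


print(budget(STAGES_MS))          # co-located models: (600, 1600)
print(budget(STAGES_MS, hops=2))  # assumed two hops:  (620, 1800)
```

Even the best case is double the 300ms conversational threshold, and the worst case grows quickly once hops are added.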

The fundamental problem is that the centralized hyperscalers were built to process language, not to converse in real-time with users.

At PolarGrid, our pre-production benchmarks show a different picture.

Our voice pipeline achieves 364ms p50 end-to-end latency, measured from audio input to audio output, including real network round-trip time. The p95 holds at 403ms, demonstrating consistent performance with minimal tail latency.

We're running Whisper for speech-to-text, Llama for language processing, and Kokoro for voice synthesis on Ada Lovelace architecture GPUs. Our production deployment on Blackwell is projected to close the gap to sub-300ms.

Our measurement includes network latency because that's what users experience.
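Percentile figures like the p50 and p95 above are computed from raw per-request timings. The sketch below uses the nearest-rank method, which is one of several common definitions. The `percentile` helper and the sample values are synthetic and purely illustrative; they are not PolarGrid benchmark data.

```python
import math


def percentile(samples, p):
    """Nearest-rank percentile: the smallest sample with at least p% of data at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


# Synthetic end-to-end latencies in milliseconds (NOT real measurements).
latencies_ms = [
    300, 310, 320, 330, 340, 350, 360, 370, 380, 390,
    400, 410, 420, 430, 440, 450, 460, 470, 800, 1500,
]

print("p50:", percentile(latencies_ms, 50))  # 390
print("p95:", percentile(latencies_ms, 95))  # 800
print("p99:", percentile(latencies_ms, 99))  # 1500
```

In this synthetic set the median looks healthy while two slow requests dominate the tail, which is why a p50 on its own says little about how consistent conversations feel.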

The key constraint for real-time inference is physics rather than algorithms.

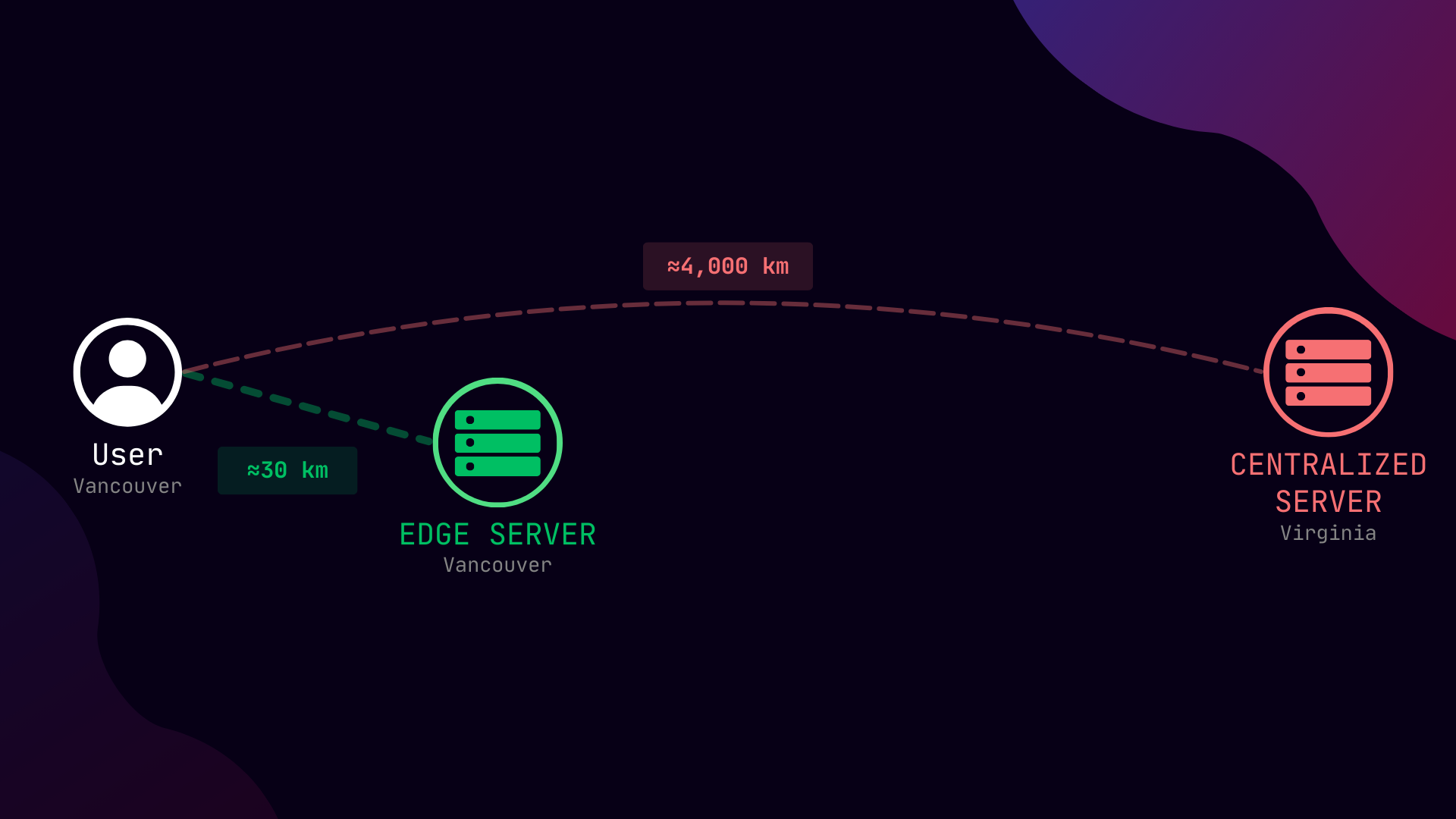

Light travels through fiber at roughly 200 km per millisecond. A user in Vancouver calling a data center in Virginia faces 50-80ms of unavoidable round-trip delay before any processing begins. Any exchange that needs two round trips has waited 100-160ms on geographical distance alone.

Edge deployment changes the equation. When inference runs close to users, whether it's in Vancouver for Vancouver customers, or in Montreal for Eastern Canada, you eliminate this speed-of-light tax. Our benchmarks were measured from a residential Vancouver connection to our Vancouver edge server. The network latency that kills centralized systems becomes negligible.
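The speed-of-light floor described above can be estimated from distance alone. The sketch below is a back-of-the-envelope calculation, not a network model: the coordinates are approximate values we assume for Vancouver and for Ashburn, Virginia, and the function names are illustrative. It uses the great-circle distance, which real fiber routes always exceed.

```python
import math

FIBER_KM_PER_MS = 200.0   # approximate speed of light in fiber, from the text
EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def great_circle_km(lat1, lon1, lat2, lon2):
    """Haversine great-circle distance between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def min_round_trip_ms(distance_km):
    """Lower bound on round-trip time: there and back at fiber speed, zero processing."""
    return 2 * distance_km / FIBER_KM_PER_MS


# Approximate coordinates (assumptions for illustration).
VANCOUVER = (49.28, -123.12)
ASHBURN_VA = (39.04, -77.49)

distance = great_circle_km(*VANCOUVER, *ASHBURN_VA)
print(f"distance: {distance:.0f} km")
print(f"minimum round trip: {min_round_trip_ms(distance):.1f} ms")
```

With these coordinates the distance comes out at about 3,750 km, for a floor of roughly 37ms per round trip. Real routes are longer than the great circle and pass through switching equipment, which is consistent with the 50-80ms observed in practice.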

Here's what most voice AI vendors won't tell you.

Your competition is a human agent that your users could have spoken to instead of your AI. And the threshold for natural conversation was established by millions of years of evolution, not by what's technically convenient for cloud architectures.

When a user says, "voice AI feels robotic," they're not making a subjective aesthetic judgment. They're detecting a timing pattern that evolution taught them signals something is wrong with the interaction.

The platforms that win will be the ones that treat latency as a first-class constraint rather than an optimization to pursue after launch.

The path to sub-300ms voice AI requires a purpose-built stack:

Edge-first deployment. Move inference to within a 50ms round trip of your users. That's the only way to overcome the speed-of-light tax.

Streaming-first design. Output audio-ready tokens the moment they're generated. Buffering for efficiency is not appropriate for real-time inference.

Tail latency obsession. P50 is a starting point. Your p95 and p99 determine whether users experience consistent conversations or intermittent frustration. Our 403ms p95 means users rarely encounter outlier delays.

Model co-location. When your STT, LLM, and TTS run on the same node, inter-service communication drops below 10ms. Distributed systems spread across regions introduce latency at every hop.
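To make the streaming-first point concrete, here is a minimal sketch of one common pattern: forwarding LLM output to the TTS stage sentence by sentence instead of waiting for the full response. It assumes a TTS engine that accepts text one sentence at a time. The `stream_chunks` and `fake_llm` names are hypothetical, the token list stands in for a real model, and this is not a description of PolarGrid's implementation.

```python
from typing import Iterable, Iterator

# Characters treated as sentence boundaries (a simplifying assumption).
SENTENCE_END = (".", "!", "?")


def stream_chunks(tokens: Iterable[str]) -> Iterator[str]:
    """Yield a chunk as soon as a sentence boundary arrives, not at end of response."""
    buffer = ""
    for token in tokens:
        buffer += token
        if buffer.rstrip().endswith(SENTENCE_END):
            yield buffer.strip()
            buffer = ""
    if buffer.strip():
        # Flush whatever is left when the model stops mid-sentence.
        yield buffer.strip()


def fake_llm() -> Iterator[str]:
    """Stand-in for a token-streaming LLM (hypothetical)."""
    yield from ["Sure", ",", " I", " can", " help", ".",
                " What", " is", " your", " order", " number", "?"]


for chunk in stream_chunks(fake_llm()):
    # A real pipeline would hand each chunk to the TTS stage here.
    print(chunk)
```

The first chunk is available after six tokens rather than twelve, so speech synthesis can begin while the model is still generating the rest of the reply.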

This is the infrastructure we're building at PolarGrid. Because human conversation happens in human time, and you can't negotiate with biology.

The voice agents that feel intelligent will be the ones that respond like intelligent humans: immediately, naturally, without the pauses that signal confusion or breakdown.

364ms is close. Sub-300ms is the target. At our latency, the voice agent sitting on the shelf, the one that gave relevant responses but couldn’t match human timing, becomes a production tool that gets used. And the pause that kills it disappears.

By Sev Geraskin, Co-Founder and VP of Engineering, PolarGrid