Running Inference Across Multiple Colos Without a Central Brain

April 15, 2026

PolarGrid started with a single edge node in Vancouver, running a single model on a single GPU. A Dell PowerEdge blade server sits on my desk, with a loud fan creating ambient noise similar to an airplane engine on a transatlantic flight. The deployment process was SSH into the box, pull the container, run it, and point DNS at it. That prototype reduced inference network latency by more than 70% compared to centralized cloud routes.

When we expanded to Toronto and Montreal, we had to answer a question that dictates most of the architecture: how does a client request end up on the right GPU, and how do new models get onto the right nodes, without creating a bottleneck or putting a single service in the middle that everything depends on?

We run inference across three production colocations today, and every node operates independently. There is no central orchestrator in the request path, and no single service whose failure can take the whole fleet down. The rule we hold ourselves to: anything in the hot path is local to the node. Anything that can tolerate seconds or minutes of staleness can be centralized.

Each PolarGrid edge node is a bare-metal machine with NVIDIA GPUs that runs MicroK8s. The inference stack has two layers with a hard security boundary between them.

The first layer is the API gateway. It runs in a Kata container, providing VM-level isolation from the host. It faces the internet, handles TLS termination, authentication, and rate limiting. It speaks the OpenAI-compatible API: /v1/chat/completions, /v1/models, /health. It also exposes a /v1/models/load endpoint for dynamic model management.

The second layer is NVIDIA Triton Inference Server. Triton has direct GPU access via the NVIDIA runtime but has no external network exposure. A Kubernetes network policy blocks all traffic to Triton except from the gateway's namespace. External requests hit the gateway; the gateway forwards to Triton over the internal cluster network. Triton runs inference on the GPU and returns the result.
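A network policy of the kind described above can be sketched as a small manifest builder. This is an illustration under assumptions, not our actual manifest: the policy name, namespaces, and pod labels are hypothetical, and the namespace match relies on the `kubernetes.io/metadata.name` label that recent Kubernetes versions set on every namespace.

```python
import json


def triton_ingress_policy(triton_namespace, gateway_namespace, triton_labels):
    """Build a NetworkPolicy that admits ingress to Triton pods only from one namespace."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {
            "name": "triton-allow-gateway-only",  # hypothetical name
            "namespace": triton_namespace,
        },
        "spec": {
            # Which pods the policy protects.
            "podSelector": {"matchLabels": triton_labels},
            # Declaring Ingress means any ingress not listed below is denied.
            "policyTypes": ["Ingress"],
            "ingress": [
                {
                    "from": [
                        {
                            "namespaceSelector": {
                                "matchLabels": {
                                    "kubernetes.io/metadata.name": gateway_namespace
                                }
                            }
                        }
                    ]
                }
            ],
        },
    }


def to_manifest(policy):
    """Serialize to JSON, which kubectl accepts alongside YAML."""
    return json.dumps(policy, indent=2)
```

The output of `to_manifest` could be written to a file and applied with `kubectl apply -f`, assuming the cluster's network plugin enforces network policies.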

This separation matters because it means the component touching the internet has no GPU access, and the component with GPU access cannot be reached from the internet. The attack surface of a public-facing inference endpoint drops substantially when you split the security boundary from the compute boundary.

When we had one node, model deployment was straightforward. Now we run different models on different nodes with different GPU configurations, and the deployment mechanism had to accommodate that without a central coordinator pushing changes.

Each node runs deployment scripts that handle the full lifecycle: validate prerequisites (MicroK8s, Kata runtime, GPU drivers, minimum 100GB disk), build Docker images for the gateway and Triton backend, create Kubernetes secrets for JWT signing keys and HuggingFace tokens, apply the deployment manifests via kubectl, and wait for health checks to pass.
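The prerequisite step is the easiest part of that lifecycle to illustrate. The sketch below is not our deployment script: the binary names are hypothetical stand-ins for however the real script detects MicroK8s, the Kata runtime, and the GPU driver, and the 100GB minimum is read as decimal gigabytes.

```python
import shutil

MIN_FREE_BYTES = 100 * 10**9  # the 100GB minimum, assumed to mean decimal gigabytes

# Hypothetical binary names; a real script may probe these differently.
REQUIRED_BINARIES = ("microk8s", "kata-runtime", "nvidia-smi")


def check_prerequisites(path="/", binaries=REQUIRED_BINARIES, min_free=MIN_FREE_BYTES,
                        which=shutil.which, disk_usage=shutil.disk_usage):
    """Return a list of human-readable problems; an empty list means the node is ready."""
    problems = []
    for name in binaries:
        # shutil.which returns None when the binary is not on PATH.
        if which(name) is None:
            problems.append(f"missing binary: {name}")
    free = disk_usage(path).free
    if free < min_free:
        problems.append(
            f"only {free // 10**9} GB free on {path}, need {min_free // 10**9} GB"
        )
    return problems
```

Returning a list of problems rather than failing on the first one lets an operator fix everything in one pass before rerunning the deployment.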

Models themselves are loaded dynamically via the gateway. The gateway exposes a POST /v1/models/load endpoint, which accepts a Hugging Face model identifier. When called, the gateway downloads the model, converts it to Triton's format (generating the config.pbtxt and model files), and writes the resulting files to a shared volume. A sidecar container running alongside Triton watches that volume. When it detects a new model directory with a valid config, it copies the files into Triton's model repository and triggers a reload via Triton's native functionality.
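One pass of the sidecar's work can be sketched as follows. This is a simplified illustration, not the sidecar itself: "valid config" is reduced to "a config.pbtxt file exists", the function and parameter names are hypothetical, and the reload is an injected callable. With Triton in explicit model-control mode, that callable might POST to `/v2/repository/models/<name>/load`, though which mechanism the real sidecar uses is not specified here.

```python
import shutil
from pathlib import Path


def sync_new_models(staging: Path, repository: Path, load_model) -> list[str]:
    """Copy newly staged models into the repository and request a load for each.

    `load_model` is an injected callable that receives the model name.
    """
    loaded = []
    for model_dir in sorted(p for p in staging.iterdir() if p.is_dir()):
        config = model_dir / "config.pbtxt"
        target = repository / model_dir.name
        # Skip half-written models (no config yet) and ones already synced.
        if not config.is_file() or target.exists():
            continue
        # Copy under a temporary name, then rename, so the final directory
        # name never points at a partially copied model.
        tmp = repository / f".{model_dir.name}.tmp"
        if tmp.exists():
            shutil.rmtree(tmp)
        shutil.copytree(model_dir, tmp)
        tmp.rename(target)
        load_model(model_dir.name)
        loaded.append(model_dir.name)
    return loaded
```

A real sidecar would call something like this in a loop or from a filesystem watcher, and would need to handle updates to models that already exist, which this sketch skips.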

The result is that model deployment is an API call to the node itself. No central service needs to know about it. An operator or an automated pipeline can load a model onto a specific node by directly hitting that node's gateway. Different nodes can run entirely different model sets based on their hardware, their tenants, or their geographic purpose.

For multi-GPU nodes, model-to-GPU pinning is configured through a configuration file on the host. A node with four GPUs might pin Whisper (STT) to GPU 3, a Llama 70B across GPUs 0-2, and the voice synthesis model to GPU 3 alongside Whisper. The deployment script reads this config and allocates GPU resources to Triton accordingly. Getting this right was harder than it sounds. We went through several iterations of GPU pinning strategies before landing on one that reliably prevents models from starving each other for GPU memory. In early April, we put in multiple hotfixes, each addressing a different GPU contention scenario we discovered under production load.
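To make the pinning idea concrete, here is a sketch of a check that could run before deployment. The layout mirrors the four-GPU example above, but the config shape, the model keys, and every memory figure are hypothetical placeholders rather than our real format or measurements.

```python
# Hypothetical pinning layout; memory figures are illustrative only.
PINNING = {
    "llama-70b": {"gpus": [0, 1, 2], "mem_gb_per_gpu": 60},
    "whisper": {"gpus": [3], "mem_gb_per_gpu": 10},
    "tts": {"gpus": [3], "mem_gb_per_gpu": 6},
}


def validate_pinning(pinning, gpu_count, gpu_mem_gb):
    """Return a list of problems: unknown GPU indices or oversubscribed memory."""
    used = {gpu: 0 for gpu in range(gpu_count)}
    problems = []
    for model, spec in pinning.items():
        for gpu in spec["gpus"]:
            if gpu not in used:
                problems.append(f"{model}: GPU {gpu} does not exist")
                continue
            # Sum what every model pinned to this GPU expects to reserve.
            used[gpu] += spec["mem_gb_per_gpu"]
    for gpu, total in used.items():
        if total > gpu_mem_gb:
            problems.append(f"GPU {gpu}: {total} GB pinned, {gpu_mem_gb} GB available")
    return problems
```

A static check like this only catches oversubscription that is visible in the config. It would not have caught the runtime contention scenarios described above, which only showed up under production load.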

While the node architecture handles the "how does inference run" question, our routing architecture handles "how does a client request reach the right node in the first place."

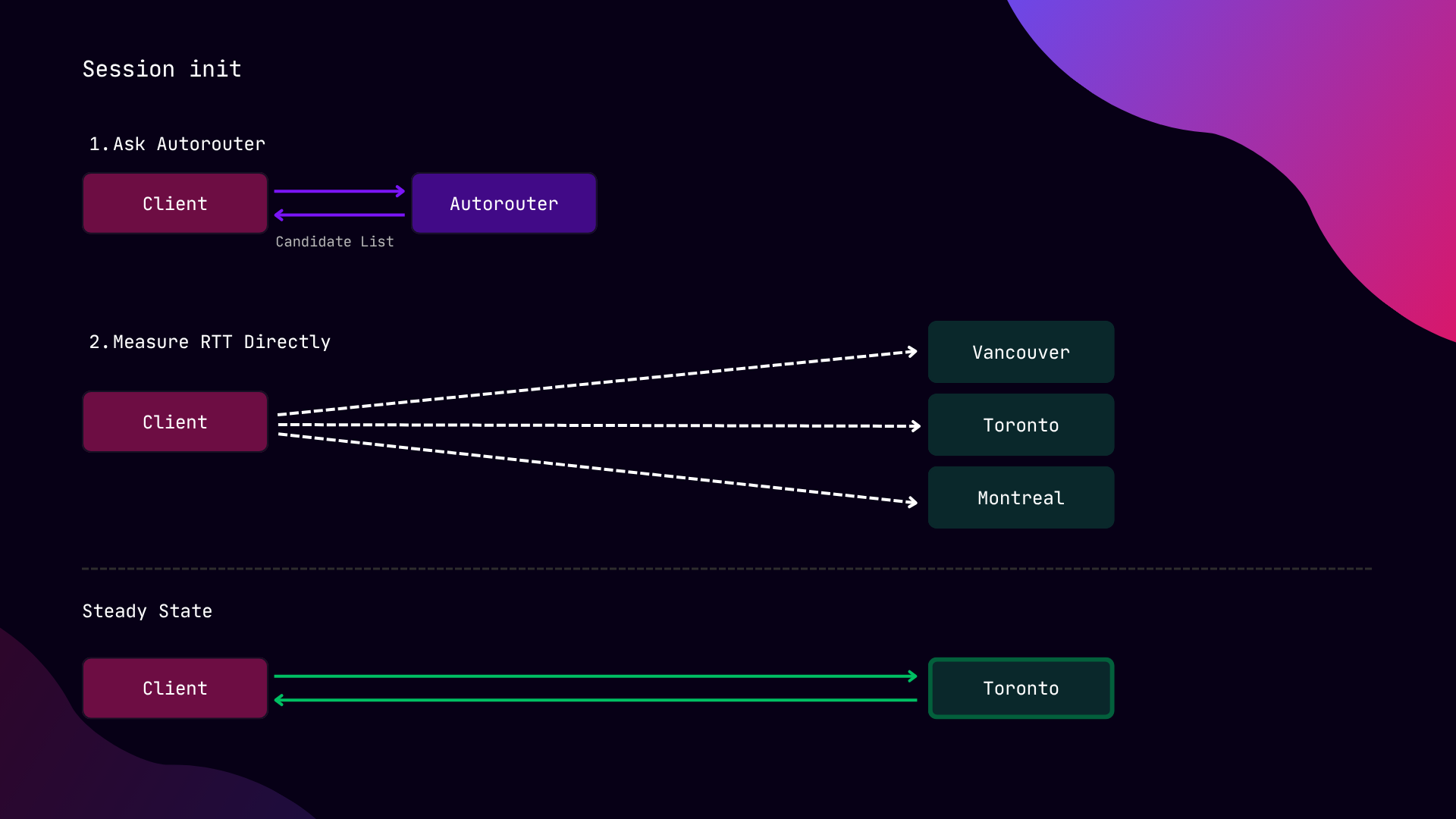

PolarGrid's autorouter is a Lambda function behind our API Gateway. When a client starts a session, the SDK calls the autorouter's /v1/route endpoint. The autorouter queries our edge_servers table in the database for all enabled nodes, their DNS addresses, ports, and geographic coordinates, and caches the result in memory for 5 minutes (surviving across warm Lambda invocations). The response is a list of available regions with their endpoints.
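The five-minute in-memory cache follows a common Lambda pattern: keep state at module level so it survives while the execution environment stays warm. The sketch below assumes a hypothetical `fetch_nodes` callable standing in for the edge_servers query. It illustrates the pattern and is not the autorouter's code.

```python
import time

CACHE_TTL_SECONDS = 300  # the five-minute window

# Module-level state persists between invocations of a warm Lambda.
_cache = {"expires_at": 0.0, "nodes": None}


def get_enabled_nodes(fetch_nodes, now=time.time):
    """Return cached node rows, calling `fetch_nodes` again once the TTL lapses."""
    current = now()
    if _cache["nodes"] is None or current >= _cache["expires_at"]:
        _cache["nodes"] = fetch_nodes()
        _cache["expires_at"] = current + CACHE_TTL_SECONDS
    return _cache["nodes"]
```

Because each warm environment holds its own copy, a newly enabled or disabled node can take up to five minutes to show up in every response. That is the kind of staleness the routing layer can tolerate, since the client probes each candidate anyway.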

In PolarGrid’s distributed architecture, the autorouter is a decision service rather than a proxy. It tells the client where to go rather than forwarding the request.

After receiving the candidate list, the SDK pings each node's /health endpoint in parallel to measure the actual round-trip time from the client's network position. This gives us the latency that user sees on that network at that moment, rather than a DNS resolver's approximation. A user in Toronto on a congested ISP might find that the Montreal node is 8ms faster than the Toronto node that is physically closer to them. In that case, the SDK will pick Montreal.
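The probing step can be sketched with asyncio. The `probe` argument is a hypothetical awaitable that performs one /health request, injected so the sketch stays independent of any HTTP library. The function names and the two-second timeout are assumptions, not the SDK's actual API.

```python
import asyncio
import time


async def measure_rtt(probe, endpoint, timeout=2.0):
    """Time one awaitable health probe; return None if it fails or times out."""
    start = time.perf_counter()
    try:
        await asyncio.wait_for(probe(endpoint), timeout)
    except (asyncio.TimeoutError, OSError):
        return None
    return time.perf_counter() - start


async def rank_candidates(probe, endpoints):
    """Probe all endpoints concurrently and return the reachable ones, fastest first."""
    rtts = await asyncio.gather(*(measure_rtt(probe, e) for e in endpoints))
    reachable = [(rtt, e) for rtt, e in zip(rtts, endpoints) if rtt is not None]
    return [e for _, e in sorted(reachable)]
```

Running the probes concurrently means discovery takes roughly as long as the slowest responding node (or the timeout), not the sum of all round trips. A production SDK would likely take several samples per node to smooth out jitter, which this sketch omits.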

Once the SDK selects a node, it pins to that endpoint for the duration of the session. Every subsequent inference call, whether it is a chat completion, a transcription, or a TTS request, goes directly from the client to the edge node. The autorouter is not in the loop, and no central proxy sits in the data path.

If the pinned node becomes unreachable, the SDK falls back to the next-best candidate from its cached list. If the cached list is stale, it re-probes. The failover decision is made on the client side, where network conditions are observed firsthand, not by a central health checker that sees the network from a different vantage point.
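Client-side failover of this kind can be sketched as a small session object. The class and method names are hypothetical, and the sketch simplifies the staleness rule: it re-probes only once the cached list is exhausted, whereas a real SDK would also track the age of the list.

```python
class PinnedSession:
    """Holds a ranked candidate list and fails over on the client side."""

    def __init__(self, ranked_endpoints, reprobe):
        self._candidates = list(ranked_endpoints)
        self._reprobe = reprobe  # callable returning a fresh ranked list

    @property
    def pinned(self):
        """The endpoint currently in use, or None if nothing is reachable."""
        return self._candidates[0] if self._candidates else None

    def call(self, send):
        """Run `send(endpoint)` on the pinned node, falling back down the list."""
        reprobed = False
        while True:
            while self._candidates:
                try:
                    return send(self._candidates[0])
                except ConnectionError:
                    # Drop the unreachable node; the next-best one becomes pinned.
                    self._candidates.pop(0)
            if reprobed:
                raise ConnectionError("no reachable edge node")
            # Cached list exhausted: measure again, once, before giving up.
            self._candidates = list(self._reprobe())
            reprobed = True
```

After a failover, the session stays pinned to the new node for subsequent calls, so one bad node costs one failed attempt rather than one per request.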

For this routing architecture to work, each node has to report enough about itself for clients and operators to make informed decisions.

The edge API server exposes a /health endpoint that checks the Triton backend's liveness and reports the node's identity, runtime type, and feature flags. The SDK uses this during discovery.

Every inference response follows the standard OpenAI format: id, model, choices, and usage with token counts. Each request passes through a context that captures structured telemetry: request ID, organization and project IDs, model, service type (LLM, STT, TTS, voice agent, vision), input and output token counts, total duration, time-to-first-byte, node ID, GPU ID, region, and whether the response was successfully delivered.

These events are flushed to a centralized location every five seconds, partitioned by timestamp and region. The telemetry layer includes schema validation at ingestion, a retry queue for failed uploads, disk spill on shutdown, crash recovery on startup, and periodic heartbeat events for gap detection across the fleet. The metadata emission is fire-and-forget, so it never breaks request handling.
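The fire-and-forget contract and the retry queue can be sketched together. This is a minimal illustration with hypothetical names. It leaves out schema validation, disk spill, crash recovery, and heartbeats, and the `upload` callable stands in for whatever client writes to the centralized store.

```python
import queue
import threading


class TelemetryBuffer:
    """Buffers events in memory and uploads them in batches off the request path."""

    def __init__(self, upload, max_events=10_000):
        self._upload = upload  # callable taking a list of event dicts
        self._events = queue.Queue(maxsize=max_events)
        self._retry = []       # batches whose upload failed

    def emit(self, event):
        """Fire-and-forget: never block or raise into request handling."""
        try:
            self._events.put_nowait(event)
        except queue.Full:
            pass  # drop the event rather than slow down inference

    def flush(self):
        """Upload previously failed batches first, then everything buffered since."""
        batch = []
        while True:
            try:
                batch.append(self._events.get_nowait())
            except queue.Empty:
                break
        pending = self._retry + ([batch] if batch else [])
        self._retry = []
        for item in pending:
            try:
                self._upload(item)
            except Exception:
                self._retry.append(item)  # keep it for the next flush

    def run(self, stop: threading.Event, interval=5.0):
        """Flush every `interval` seconds until `stop` is set, then drain once more."""
        while not stop.wait(interval):
            self.flush()
        self.flush()
```

`run` is meant for a background thread, for example `threading.Thread(target=buf.run, args=(stop,), daemon=True).start()`, so request handlers only ever touch `emit`.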

The autorouter returns all available regions, but clients often need to know which models are available in which regions. Not every node runs every model.

Our inference API has a hot database-backed model registry, keyed by model ID and region. It stores model type, parameter count, max token length, per-region latency characteristics, and pricing. When the SDK or the dashboard queries /v1/models, the response can be filtered by the region header to show only what is loaded on a specific node.

This matters for voice pipelines that need a specific model chain: Whisper for STT, Llama for reasoning, Kokoro for TTS. The routing layer can filter candidates down to nodes that have the full set loaded, rather than routing to a node that is fast but missing a model the pipeline needs.
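The filtering step reduces to a subset check. In this sketch the model IDs and region names are hypothetical, and the per-region model lists stand in for what the registry would return.

```python
# Hypothetical model IDs for the voice chain described above.
VOICE_CHAIN = {"whisper", "llama", "kokoro"}


def regions_with_models(loaded_by_region, required):
    """Return the regions whose loaded models include every required model."""
    required = set(required)
    return [
        region
        for region, loaded in loaded_by_region.items()
        if required <= set(loaded)  # subset test: nothing required is missing
    ]
```

Filtering before latency probing keeps the SDK from pinning a session to a node that wins on round-trip time but cannot serve the whole pipeline.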

Running three colos without a central brain is not free. Here are the hard parts:

Fleet heterogeneity. Each node has its own deployment, its own model set, its own GPU configuration. Deploying a new model to all three nodes means three separate operations, whether triggered manually or by a pipeline. This is a feature when you want incremental rollouts, and a pain when you want fleet-wide consistency in a hurry.

Observability without centralized control. Each node is autonomous, which is good for resilience and difficult for the operator who wants to know "what is the fleet doing right now." We aggregate Prometheus metrics and logs, but assembling a fleet-wide view without accidentally rebuilding a central brain is a problem in itself. The principle is that the observability pipeline is read-only: it can see everything but cannot issue commands.

GPU contention under production load. Multi-GPU pinning is deterministic in theory. In practice, we discovered edge cases in which Triton's GPU allocation and Kubernetes device plugin requests conflicted, leading to model starvation. The fix was a combination of explicit GPU pinning in our config, careful deployment ordering (waiting for one model to finish reserving its GPU before deploying the next), and removing features that sounded useful in development but created race conditions in production.

Stateful session migration. When a voice session is pinned to a node, and that node degrades, the SDK can fail over to the next available node for the next request. But a true mid-conversation migration, moving KV cache, conversation history, and audio buffers to a different node, is genuinely hard. For a 70B model mid-turn, the KV cache alone is gigabytes of GPU memory, and shipping that between colos incurs more latency than the entire budget we're trying to protect. Our current approach is graceful degradation: finish the current turn on the degrading node, then switch to a healthy node before the next one. This is an open problem we are actively working on.
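A back-of-the-envelope calculation shows why the KV cache dominates migration cost. The defaults below assume the published Llama 2/3 70B shape (80 layers, 8 key-value heads under grouped-query attention, head dimension 128) with 16-bit values. The context length and the link speed in the usage note are hypothetical, not measurements from our colos.

```python
def kv_cache_bytes(tokens, layers=80, kv_heads=8, head_dim=128, bytes_per_value=2):
    """Size of the KV cache for one sequence: keys plus values for every layer."""
    per_token = 2 * layers * kv_heads * head_dim * bytes_per_value  # 2 = K and V
    return per_token * tokens


def transfer_seconds(num_bytes, link_gbit_per_s):
    """Idealized time to ship bytes over a link, ignoring protocol overhead."""
    return num_bytes * 8 / (link_gbit_per_s * 1e9)
```

Under these assumptions each token costs 327,680 bytes, so an 8,192-token conversation holds exactly 2.5 GiB of cache (`kv_cache_bytes(8192) / 2**30`). Even on a hypothetical dedicated 10 Gbit/s path between colos, `transfer_seconds(kv_cache_bytes(8192), 10)` comes to roughly 2.1 seconds before any serialization or GPU copy time, which is far beyond a conversational latency budget.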

PolarGrid currently operates edge nodes in Toronto, Montreal, and Vancouver. The prototype that shipped last year reduced inference network latency by more than 70 percent compared to centralized cloud routes. The architecture described here is what makes that result hold across multiple colos rather than requiring a single well-placed node.

A few principles that have survived contact with production:

Each node is a self-contained inference unit. Gateway, Triton, GPU, models, certs, secrets. If the rest of the infrastructure were to disappear, the node would continue serving the traffic it already has.

Route once, then get out of the way. The autorouter makes one decision per session. After that, the client talks directly to the GPU. Two orders of magnitude less load on the routing layer, and no central proxy adding latency to every token.

The client is the best observer of its own network. Delegating the final routing decision to the SDK, which can measure actual latency from its specific network position, produces better outcomes than any server-side heuristic.

Heterogeneity is the natural state. Different nodes run different models on different hardware with different GPU layouts. Fighting that with a centralized brain that enforces uniformity would be fighting the physics of edge deployment.

As we scale toward 10 to 15 nodes by the end of the year and a longer-term footprint across North America, each added node is an independent capacity unit rather than additional load on a central coordinator.

Sev Geraskin, Co-Founder and VP of Engineering, PolarGrid